Best AI for Financial Modeling: Excel Comparisons (2026)

Compare the top 5 AI tools for Excel financial modeling. See which delivers audit-ready formulas for real estate underwriting versus "black box" answers.

If you are a real estate analyst, the following scenario is likely familiar: It is 11:00 PM, and a Managing Director sends over a 120-page Offering Memorandum (OM) with a request for a "quick" back-of-the-envelope valuation by morning.

In 2024, this meant hours of manual data entry—copy-pasting rent rolls, re-typing T-12 expenses, and painstakingly building a pro forma while battling fatigue-induced formula errors.

By 2026, the landscape has shifted. AI tools for Excel promise to automate this grunt work. But for financial professionals, automation brings a new risk: the "Black Box" problem. Most AI tools can give you an answer (e.g., "The IRR is 14%"), but they cannot show you the math. In institutional finance, an unauditable number is a useless number.

This guide compares the best AI tools for Excel financial modeling in 2026, evaluating them on the criteria that matter to deal teams: auditability, real estate context, and formula transparency.

Introduction to Financial Modeling

Financial modeling is the backbone of modern finance, empowering professionals to forecast outcomes, analyze trends, and make data-driven decisions. For finance teams, the ability to create, analyze, and interpret complex financial data is essential for everything from budgeting to investment evaluation. The rise of AI tools has sparked a fundamental shift in how financial modeling is performed. AI-powered solutions now enable finance professionals to automate repetitive tasks, streamline data analysis, and generate actionable insights with unprecedented speed and accuracy. Instead of spending hours on manual calculations, teams can focus on high-level decision-making and strategic forecasting. As a result, AI tools have become indispensable for finance professionals seeking to create robust models, uncover deeper insights, and drive better business outcomes.

What Makes AI Good for Financial Modeling?

Before ranking the tools, we must define what “good” looks like in a high-stakes investment environment. AI adoption is accelerating across the financial services industry—including banking, insurance, fintech, and asset management—driven by the need for greater efficiency, accuracy, and competitive advantage. Financial services organizations are increasingly leveraging AI for tasks such as financial modeling, analysis, compliance, fraud detection, and reporting, transforming how data-driven decisions are made. A generic chatbot might write a poem, but it cannot underwrite a multifamily acquisition.

1. Formula Transparency vs. Black Box Results

The most critical differentiator. Does the AI give you a static number (a hardcoded value), or does it write a dynamic Excel formula (e.g., =SUM(B2:B14)*1.03)? For an Investment Committee (IC) or LP to trust a model, every output must be traceable back to its inputs.
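The distinction between a hardcoded value and a formula can be checked mechanically. The sketch below is illustrative only: it assumes a sheet has already been loaded into a plain dictionary of cell references to raw contents (the `sheet` data and the function name `find_hardcoded_outputs` are hypothetical), and it flags any output cell that does not contain a formula.

```python
from typing import Dict, List, Union

CellValue = Union[str, int, float, None]


def find_hardcoded_outputs(
    cells: Dict[str, CellValue], output_refs: List[str]
) -> List[str]:
    """Return the output cells that hold a typed-in value instead of a formula."""
    flagged = []
    for ref in output_refs:
        value = cells.get(ref)
        # In Excel, a cell's raw content is a formula when it starts with "=".
        is_formula = isinstance(value, str) and value.startswith("=")
        if not is_formula:
            flagged.append(ref)
    return flagged


# Hypothetical sheet: one dynamic output, one static output.
sheet = {
    "B15": "=SUM(B2:B14)*1.03",  # traceable back to B2:B14
    "C15": 1284500.0,            # a bare number with no visible source
}

flagged = find_hardcoded_outputs(sheet, ["B15", "C15"])
print(flagged)
```

A check like this only tells you whether a formula exists, not whether the formula is right, so it complements a manual review rather than replacing it.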

2. Excel Integration

Does the tool live where you work? It's important to distinguish between agent mode, where embedded AI features operate directly within Excel to automate complex financial modeling tasks, and an Excel add-in, which is a third-party tool that extends Excel's native functionality. Deep integration with platforms like Google Workspace or Microsoft Office is also crucial, as it improves workflow efficiency and collaboration for finance teams. The best tools work natively inside the spreadsheet, so you never have to switch back and forth between applications.

3. Unstructured Data Handling

Financial modeling rarely starts with clean CSVs. It starts with messy PDFs, scanned rent rolls, and unstructured OMs, making it crucial to transform this information into clean data for accurate modeling and reliable analysis. The top AI tools must bridge the gap between “PDF chaos” and “Excel structure.”
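To make the "PDF chaos to Excel structure" step concrete, here is a minimal sketch that pulls structured rows out of text already extracted from a rent roll. The column layout, the sample text, and the `parse_rent_roll` helper are all assumptions made for illustration; real rent rolls vary widely, and scanned documents need OCR before a step like this can run.

```python
import re
from typing import Dict, List

# Assumed row layout: unit, size in SF, monthly rent, lease end date.
ROW = re.compile(
    r"(?P<unit>\d+[A-Z]?)\s+"
    r"(?P<sqft>[\d,]+)\s*SF\s+"
    r"\$\s*(?P<rent>[\d,]+(?:\.\d{2})?)\s+"
    r"(?P<lease_end>\d{2}/\d{2}/\d{4})"
)


def parse_rent_roll(text: str) -> List[Dict[str, object]]:
    """Turn raw rent roll text into one dictionary per unit."""
    rows = []
    for line in text.splitlines():
        match = ROW.search(line)
        if match is None:
            continue  # skip headers, totals, and page footers
        rows.append({
            "unit": match["unit"],
            "sqft": int(match["sqft"].replace(",", "")),
            "monthly_rent": float(match["rent"].replace(",", "")),
            "lease_end": match["lease_end"],
        })
    return rows


# Hypothetical extracted text, including the noise a PDF usually carries.
raw = """RENT ROLL - Page 1 of 3
Unit   Size      Rent        Lease End
101    850 SF    $1,450.00   06/30/2026
102    1,100 SF  $ 1,925.00  11/30/2026
Total            $3,375.00
"""

rows = parse_rent_roll(raw)
total_rent = sum(row["monthly_rent"] for row in rows)
print(rows, total_rent)
```

Reconciling `total_rent` against the total printed on the document is a cheap sanity check that no unit was dropped during extraction.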

The 5 Best AI Tools for Excel Financial Modeling (2026)

1. Apers AI for Excel

Best For: Institutional real estate investors, family offices, boutique investment managers, commercial brokers, and analysts in CRE.

Apers has emerged as the specialized standard for real estate financial modeling. As an AI platform designed for institutional real estate professionals, Apers is architected specifically for the underwriting workflow. It doesn’t just “read” data; it understands real estate logic (e.g., lease expirations, expense ratios, promote structures).

- Key Feature: Formula-First Architecture. Apers doesn’t just output values; it constructs the actual Excel formulas. If you ask it to build a 10-year cash flow, it creates the rows, columns, and dynamic links, allowing you to audit the logic and change assumptions later. Apers automates investment evaluation, pro forma financial modeling, due diligence, reporting, and knowledge capture.

- Pros:

  - Extracts data from PDFs (OMs/Rent Rolls) directly into model templates.

  - Integrates multiple documents like rent rolls, OMs, and T12s for streamlined financial modeling.

  - Features an autonomous modeling engine (XL-2) that processes documents such as PDFs, leases, and spreadsheets.

  - Generates auditable, dynamic formulas, not static text.

  - “Zero-Training” privacy policy ensures proprietary deal data isn’t used to train public models.

- Cons: Highly specialized for real estate; less useful for generic corporate finance tasks (e.g., inventory management).

- Verdict: The only tool capable of turbo-charging the “Junior Analyst” grunt work while maintaining institutional-grade rigor.
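What "formula-first" output means in practice can be sketched in a few lines. The example below is not Apers's implementation; it is a hypothetical illustration that generates Excel formula strings for a 10-year growth row, where each year links to the prior year and to a single assumption cell. The cell addresses (`Assumption!$C$3`, `Assumption!$C$4`, row 12 starting in column D) are assumptions chosen for the example.

```python
from typing import Dict


def col_letter(index: int) -> str:
    """Convert a 1-based column index to an Excel column letter (1 -> A, 27 -> AA)."""
    letters = ""
    while index > 0:
        index, rem = divmod(index - 1, 26)
        letters = chr(ord("A") + rem) + letters
    return letters


def build_growth_row(
    row: int, first_col: int, years: int, base_ref: str, growth_ref: str
) -> Dict[str, str]:
    """Return {cell: formula} for a row that grows at the rate in growth_ref."""
    cells = {}
    for year in range(years):
        ref = f"{col_letter(first_col + year)}{row}"
        if year == 0:
            # Year 1 links straight to the base-year input.
            cells[ref] = f"={base_ref}"
        else:
            # Later years link to the prior column and the growth assumption.
            prev = f"{col_letter(first_col + year - 1)}{row}"
            cells[ref] = f"={prev}*(1+{growth_ref})"
    return cells


noi_row = build_growth_row(
    row=12,
    first_col=4,  # column D
    years=10,
    base_ref="Assumption!$C$3",
    growth_ref="Assumption!$C$4",
)
for ref, formula in noi_row.items():
    print(ref, formula)
```

Because every year points at `Assumption!$C$4`, changing that one cell flows through the whole row, which is the behavior an auditor expects from a dynamic model.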

2. Microsoft Copilot for Finance

Best For: Large Corporate Finance Teams & General Excel Users.

Microsoft’s native AI integration is powerful due to its ubiquity. Living inside the Microsoft 365 ecosystem, Copilot has context on your emails and OneDrive files, making it a strong generalist assistant.

- Key Feature: Python in Excel. Copilot can write and execute Python code within Excel cells, which is excellent for advanced statistical analysis or forecasting large datasets that exceed Excel’s row limits. Copilot also integrates with Power BI, enabling interactive dashboards and natural language queries for business intelligence.

- Pros:

  - Native integration requires no new software installation.

  - Strong at explaining complex formulas in plain English.

  - Enterprise-level security compliance defaults.

  - Ranked third in the model-building test covered in the feature comparison below, behind Shortcut and Claude and ahead of ChatGPT.

- Cons:

  - Often outputs static values rather than dynamic formulas for complex modeling.

  - Lacks specific real estate domain knowledge (e.g., struggles with messy rent roll PDFs).

- Verdict: An excellent productivity booster for general Excel tasks, but it often lacks the precision required for deep-dive underwriting. In the model-building test covered in the feature comparison below, Copilot performed best on flowthrough of assumptions thanks to its simplicity, with Shortcut coming second.

3. ChatGPT Enterprise (OpenAI)

Best For: Ad-Hoc Analysis & Coding Custom Macros.

While not a native Excel tool, ChatGPT Enterprise (GPT-5 class models) remains a powerhouse for data analysis. Its “Advanced Data Analysis” feature can process massive files that would crash Excel.

- Key Feature: VBA/Macro Writing. ChatGPT is unmatched at writing VBA scripts to automate repetitive tasks, even if it doesn’t run them directly.

- Pros:

  - Extremely flexible; can answer any question about any topic.

  - Best-in-class reasoning capabilities for qualitative market research.

- Cons:

  - The “Air Gap” Problem: You must export data from Excel, upload the Excel files to the chat, and paste the results back. This kills efficiency and breaks the audit trail.

  - Data privacy concerns remain a hurdle for many strict compliance departments.

- Verdict: ChatGPT is a powerful sidekick for coding and research, but not a modeling tool itself. It ranked fourth in the model-building test covered in the feature comparison below: its presentation was the least polished, although its historical balance sheet was the easiest to audit among the tools tested.

4. Excel Formula Bot

Best For: Beginners & Formula Learning.

One of the early movers in the space, Excel Formula Bot is a focused tool designed to do one thing well: translate text instructions into Excel formulas.

- Key Feature: Text-to-Formula. You type "Calculate the average of column A if Column B is 'Retail'", and it gives you the exact =AVERAGEIF formula.

- Pros:

  - Inexpensive and lightweight.

  - Great for learning syntax.

- Cons:

  - Cannot build full models or structures.

  - No document extraction capabilities.

- Verdict: A great learning aid, but insufficient for professional deal analysis.
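For readers checking a generated formula, it helps to know exactly what it computes. For the text-to-formula example above, a typical result would be =AVERAGEIF(B:B,"Retail",A:A). The sketch below reproduces that logic in plain Python; the `average_if` helper and the sample columns are hypothetical and exist only to make the calculation visible.

```python
from typing import List, Optional


def average_if(
    criteria_range: List[str], criterion: str, average_range: List[float]
) -> Optional[float]:
    """Average the values whose paired label matches the criterion."""
    matches = [
        value
        for label, value in zip(criteria_range, average_range)
        # Excel's criteria matching ignores case, so compare in lowercase.
        if label.lower() == criterion.lower()
    ]
    if not matches:
        return None  # Excel returns #DIV/0! when nothing matches
    return sum(matches) / len(matches)


# Hypothetical data: column B holds the property type, column A the rent per SF.
column_b = ["Retail", "Office", "retail", "Industrial"]
column_a = [30.0, 45.0, 34.0, 12.0]

retail_average = average_if(column_b, "Retail", column_a)
print(retail_average)
```

Here the two retail rows (30.0 and 34.0) average to 32.0, which is the number the Excel formula should return on the same data.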

5. Manual Excel (The Status Quo)

Best For: Final Review & Custom Nuances.

We include this not as a tool, but as a benchmark. Even in 2026, "doing it yourself" remains the primary competitor to AI.

- Pros: Total control and zero cost (beyond salary).

- Cons: Slow, error-prone, and creates bottlenecks.

- Verdict: Manual modeling should be reserved for the final 10% of customization, not the initial 90% of build-out.

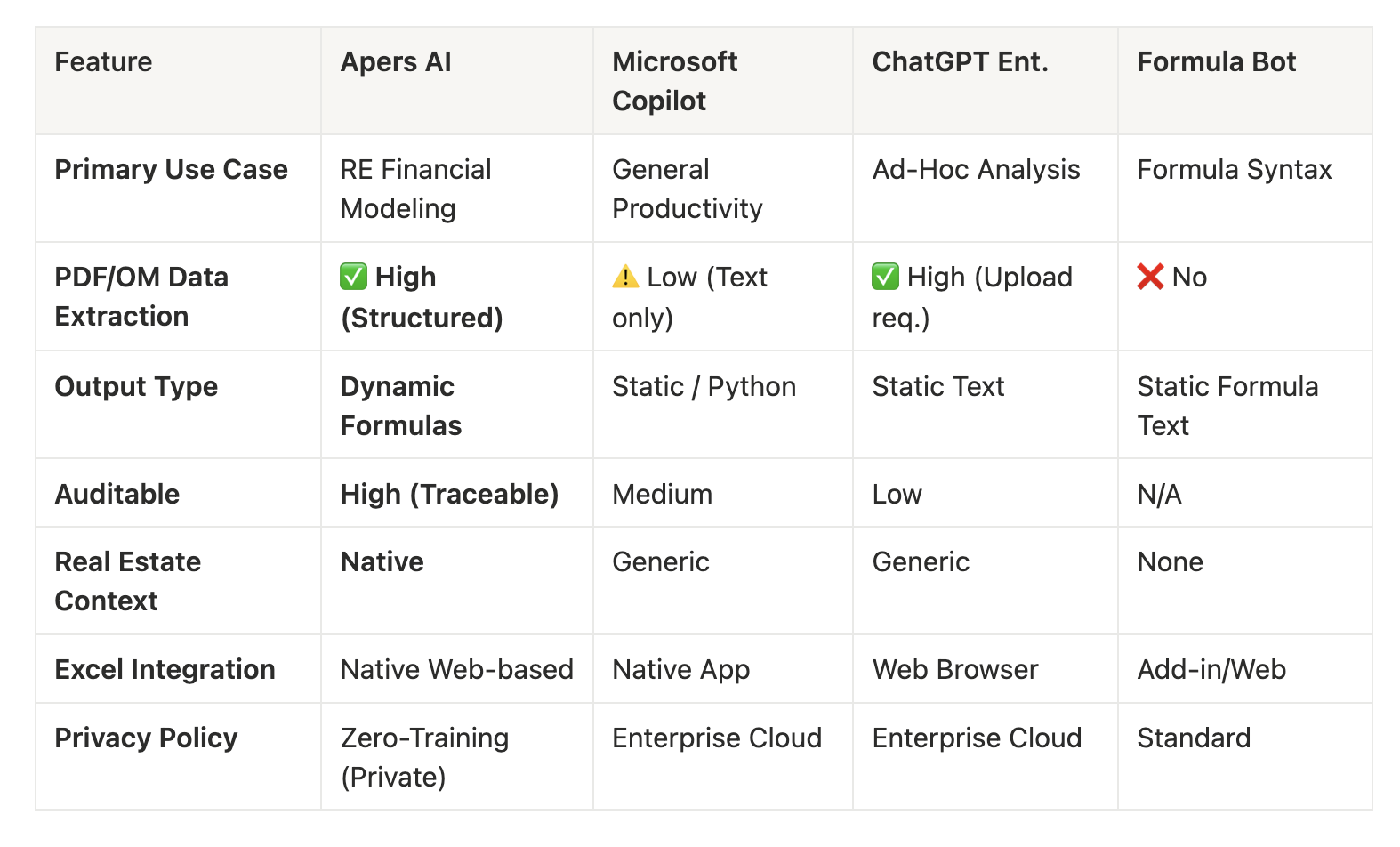

Side-by-Side Feature Comparison

The current landscape for the best AI for financial modeling is best described as a race between large LLMs and specialist tools focusing specifically on Excel and modeling. Most tools automate processes within financial workflows, but only a subset incorporate agentic AI capable of multi-step actions with full auditability and human oversight. For example, DataSnipper is an intelligent automation platform embedded in Excel that helps finance teams extract data and generate audit-ready documentation, showcasing agentic AI in action.

A key differentiator among these tools is the presence of agent mode—an embedded AI feature within Excel that enables automation of complex modeling tasks, such as building detailed financial statements, performing data analysis, and improving workflows directly within the spreadsheet environment. This contrasts with add-in solutions or standalone AI models.

When it comes to forecasting, consensus estimates are often used to generate financial projections, relying on analyst expectations for accuracy in three-statement models. Handling line items accurately, including current and non-current portions of assets and liabilities, is critical for precise financial analysis and auditing.

In terms of performance, Shortcut and Claude (two tools not profiled in the list above) significantly outperform Copilot and ChatGPT in building financial models. Among the tools tested, Shortcut stands out as the only one specifically designed for financial professionals, understanding worksheet structure and formula dependencies. Both Claude and Shortcut asked thoughtful clarifying questions after receiving prompts, much as a good junior analyst would. They also produced the most investment-bank-like outputs, while Copilot ignored formatting conventions entirely. However, even the best AI financial modeling tool, Shortcut, underperformed a lower-bucket analyst in the overall evaluation.

All tested AI tools struggled with circularity in financial models, particularly in forecasting interest income and expense correctly. This highlights the importance of formula transparency—top AI financial modeling tools allow users to trace calculations rather than providing hardcoded values.
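The circularity mentioned here arises because interest expense depends on the average debt balance, while the closing debt balance depends on the cash left after paying interest. The sketch below shows one common way to resolve it, fixed-point iteration, under deliberately simplified assumptions: a single period, a full cash sweep, and interest charged on the average of opening and closing debt. The function name and inputs are hypothetical.

```python
from typing import Tuple


def solve_circular_interest(
    opening_debt: float,
    cash_before_interest: float,
    rate: float,
    tol: float = 1e-9,
    max_iter: int = 100,
) -> Tuple[float, float]:
    """Return (interest_expense, closing_debt) for one period."""
    # First guess: charge interest on the opening balance only.
    interest = rate * opening_debt
    for _ in range(max_iter):
        # Cash left after paying interest sweeps down the debt.
        closing_debt = opening_debt - (cash_before_interest - interest)
        # Interest on the average balance depends on that closing debt.
        updated = rate * (opening_debt + closing_debt) / 2
        converged = abs(updated - interest) < tol
        interest = updated
        if converged:
            # Recompute the balance so both outputs use the final interest.
            closing_debt = opening_debt - (cash_before_interest - interest)
            return interest, closing_debt
    raise RuntimeError("interest calculation did not converge")


interest, closing_debt = solve_circular_interest(
    opening_debt=1_000.0, cash_before_interest=200.0, rate=0.06
)
print(round(interest, 2), round(closing_debt, 2))
```

With these inputs the loop settles at interest of roughly 55.67, which matches the closed-form solution rate × (2 × opening − cash) / (2 − rate). In Excel the same loop is what the iterative calculation setting performs, and it is why many modelers add a switch that breaks the circular link when the model fails to settle.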

AI tools for financial modeling are designed to integrate seamlessly with Excel, allowing users to work within the familiar spreadsheet environment. They automate the creation of complex Excel-based financial models through natural language commands, significantly reducing the time required for tasks such as DCF valuations and LBO analyses. Reports indicate that analysts can save 20-30 hours per week on modeling work.

Adoption of AI in financial services is driven by the need for higher accuracy, faster workflows, and the ability to handle increasing regulatory complexity. Integrating these tools into financial workflows is expected to improve both efficiency and decision-making, helping finance teams manage growing workloads by automating routine tasks so analysts can focus on higher-value, strategic work.

Building Audit Ready Financial Models

Creating audit-ready financial models is a non-negotiable standard for finance teams, especially when accuracy and compliance are paramount. AI-powered financial modeling tools are transforming this process by automating data extraction from diverse sources, generating transparent formulas, and maintaining full audit trails. These features ensure that every figure in your financial statements is traceable and verifiable, reducing the risk of errors and circular references that can undermine confidence in your analysis. With AI tools, finance teams can build models that are not only robust and reliable but also ready for scrutiny by auditors, stakeholders, and regulatory bodies. This audit-ready approach supports better decision-making and instills trust in the financial data that drives your business forward.

Formula vs. Black Box: Why It Matters for Due Diligence

The “Black Box” problem is the primary reason many investment firms hesitated to adopt AI in 2024-2025.

Imagine you present a deal to your Investment Committee. The CEO asks, “Why is the Year 3 NOI growth 4.5%?”

- With “Black Box” AI: You have to say, “That’s the number the AI gave me.” (Result: You lose credibility instantly).

- With Auditable AI (Apers): You click the cell. You see =F12*(1+Assumption!C4). You can trace that Assumption!C4 links to a specific inflation toggle you set, and each data point is linked to its original source for full transparency and auditability. Robust reporting features also support compliance and regulatory requirements, ensuring your process meets financial, ESG, SOX, and audit reporting standards.

The Rule of Thumb: If the AI gives you a fish (a number), it’s a calculator. If it gives you a fishing rod (a formula), it’s a modeler. For professional finance, always choose the fishing rod.
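The tracing step in the Investment Committee scenario can be illustrated with a small sketch. It uses a simplified regular expression to find the cells a formula depends on, then walks those references to print an audit trail. The `workbook` dictionary, its values, and the helper names are hypothetical, and the pattern is not a full Excel parser (for example, it would misread a function name such as LOG10 as a cell reference).

```python
import re
from typing import Dict, List, Union

# Matches references like F12, $C$4, or Assumption!C4 (simplified on purpose).
REF = re.compile(
    r"(?:(?P<sheet>[A-Za-z_][A-Za-z0-9_]*)!)?(?P<cell>\$?[A-Z]{1,3}\$?\d+)"
)


def precedents(formula: str) -> List[str]:
    """List the cell references a formula depends on, in order of appearance."""
    refs = []
    for match in REF.finditer(formula):
        cell = match["cell"].replace("$", "")
        sheet = match["sheet"]
        refs.append(f"{sheet}!{cell}" if sheet else cell)
    return refs


def trace(ref: str, cells: Dict[str, Union[str, float]], depth: int = 0) -> List[str]:
    """Walk from a cell back to its inputs, one indented line per cell."""
    value = cells[ref]
    lines = [f"{'  ' * depth}{ref}: {value}"]
    if isinstance(value, str) and value.startswith("="):
        for parent in precedents(value):
            lines.extend(trace(parent, cells, depth + 1))
    return lines


# Hypothetical workbook contents for the Year 3 NOI question.
workbook = {
    "F12": 1_000_000,                 # prior-year NOI
    "G12": "=F12*(1+Assumption!C4)",  # Year 3 NOI
    "Assumption!C4": 0.045,           # growth assumption set by the analyst
}

audit_trail = trace("G12", workbook)
print("\n".join(audit_trail))
```

The printed trail answers the CEO's question directly: the 4.5% comes from `Assumption!C4`, an input the analyst set and can defend.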

Common Challenges

While AI-powered financial modeling tools offer significant advantages, finance teams often encounter common challenges during implementation. Selecting the right tool is critical, as each platform varies in capabilities and suitability for specific financial modeling needs. Seamless integration with existing workflows—whether in Excel, Google Sheets, or other systems—is another hurdle, as disruptions can lead to inefficiencies or errors. Data quality remains a persistent concern; even the most advanced AI tools require clean, accurate data to deliver reliable results. Teams must also be vigilant about potential errors and biases introduced during modeling. By proactively addressing these challenges, finance teams can ensure that their chosen AI tools enhance, rather than hinder, their financial modeling processes.

Decision Framework: Which Tool Should You Choose?

Use this simple logic flow to decide:

1. Do you work in Real Estate (PE, Development, Brokerage, Debt)?

   - YES: Choose Apers. It is the only tool that understands rent rolls, OMs, and waterfall structures natively, and it is designed to support financial analysis and comprehensive three-statement models (income statement, balance sheet, cash flow) for commercial real estate decisions.

   - NO: Go to question 2.

2. Do you need to analyze massive datasets (1M+ rows) or write Python?

   - YES: Choose Microsoft Copilot. Its Python integration is superior for heavy data science tasks and it can handle scale, making it ideal for processing large volumes of financial data.

   - NO: Go to question 3.

3. Are you just looking to fix a specific formula error?

   - YES: Choose Excel Formula Bot. It’s cheap and effective for syntax checks.

When choosing the best AI for financial modeling, remember that robust financial analysis, the ability to scale, and support for three-statement models are crucial for making better decisions. In the model-building test described above, Shortcut ranked as the best overall AI tool for financial modeling, with Claude a close second.

Future of AI in Finance

The future of AI in finance is poised to deliver even greater value to financial modeling, forecasting, and decision-making. As AI tools continue to evolve, finance teams can expect advanced capabilities such as scenario planning, collaborative planning, and real-time insights to become standard. These innovations will enable teams to model complex scenarios, work together seamlessly across departments, and access up-to-the-minute data for more informed decisions. Staying ahead of these trends will be essential for finance professionals who want to maximize the benefits of AI-powered modeling. By embracing new workflows and continuously adapting to technological advancements, finance teams can unlock new opportunities, drive better outcomes, and maintain a competitive edge in an increasingly data-driven world.

Final Verdict

For general office productivity, Microsoft Copilot is the clear winner. It integrates seamlessly into Outlook and PowerPoint and handles basic spreadsheet tasks well.

However, for financial modeling professionals—specifically in real estate—Apers is the definitive choice in 2026. It effectively bridges the gap between “AI automation” and “Excel rigor,” allowing analysts to cut build time by 80% without sacrificing the audit trail that LPs and CFOs demand. Notably, leading financial institutions are adopting these AI tools to enhance reliability and efficiency in financial modeling, further validating their impact across the industry.

Recommendation:

- For the Firm: Equip junior analysts with Apers to automate OM extraction and initial model builds.

- For the Desk: Keep ChatGPT open for ad-hoc market research and macro writing.

- For the Org: Use Copilot for summarizing meetings and emails.

Ready to stop manually typing rent rolls? Try Apers for free and turn your next OM into a model in minutes.